Children and the Data Cycle: Rights and Ethics in a Big Data World

"With increasing collection of big data and a vocal data science movement calling for more open data and greater utilization of big data within public, private and not-for-profit policymaking arenas, ensuring the protection of and respect for children rights is becoming increasingly challenging."

This paper from the United Nations Children's Fund (UNICEF) Office of Research - Innocenti shows why child rights need to be firmly integrated into the agendas of global debates about ethics and data science. As the authors argue, in an era of increasing dependence on data science and big data, the voices of children, who constitute one in three global internet users, and of those who advocate on their behalf have been largely absent. The authors call for a much stronger appreciation of the links between child rights, ethics, and data science disciplines and for enhanced discourse between stakeholders in the data chain (including data generators, collectors, analysts, and end-users) and those responsible for upholding the rights of children, globally.

UNICEF has developed a mandatory cross-organisational procedure on ethical evidence generation (UNICEF, 2015), underpinned by a belief that ethical principles and a rights-based approach are not only relevant in research, but are equally important within all forms of data collection, analysis, and evaluation involving human subjects or sensitive secondary data. This procedure outlines explicit guidelines for data collection, including reflection on issues pertaining to data privacy, the rights of children to be consulted on issues which affect them, informed consent, security, and confidentiality. The point being made in this Innocenti paper is that fundamental questions need to be raised as to how best to translate universal principles regarding the rights of the child and traditional ethical frameworks for offline data collection, analysis, and regulation into an online environment.

This paper refers to Canavillas' (2016) definition of big data, which includes "the use of predictive analytics, user behavior analytics, or certain other advanced data analytics methods that attempt to extract value from data." While adopting this definition, the paper also recognises that big data is not solely a technological phenomenon; it has cultural and social dimensions relating to expectations of its applicability, robustness, accuracy, and objectivity across multiple domains, from education to justice systems. Analysis of big data frequently does not occur within the confines of research institutions; it is consequently not necessarily bound by human subject protections. Furthermore, big data is frequently collected by both public and private organisations, and is therefore subject to multiple and varying international and state-based interventions and standards. Analysis may be undertaken by people who may be neither child rights experts nor trained researchers familiar with the concept of ethical standards, and who may not be bound by notions of the "best interest of the child" as required by Article 3 of the 1989 Convention on the Rights of the Child (CRC).

The authors note that a number of defining features of children and their lives interact in ways that require data science to consider explicitly its ethical implications for children. Uncertainty about the life-long impacts of self-rendered and externally imposed digital identities on children and their life choices renders any assessment of potential harm and benefits in the best interest of the child difficult, if not impossible, in the face of: unknown future applications of data; children's and parents' understanding of the implications and applications of their data, with the attendant implications for self-management of their digital identities; and the insufficiency of traditional informed consent and assent processes, given the nature of the data collected from the internet, as well as the frequent opacity of the ages of data providers. Big data may potentially silence the voice of the child by encouraging the use of data and analytics rather than dialogue and engagement to ascertain perspectives, preferences, attitudes, and competencies, which is in direct contravention of Article 12 of the CRC. Other issues explored here include: digital identities and impacts over the life course; children's understanding; informed consent; the persistence of data; data anonymisation; unknown future applications and use; fragmented systems of data collection, analysis, and use; and lack of peer review and audits.

The paper provides a few examples to highlight some of the ways in which the confluence of technologies and analytics may positively affect children's protection, development, wellbeing, participation, inclusion, and access to services. These potential benefits must be considered alongside the potential use of big data for purposes other than the health and wellbeing of data providers and their communities: for example, where data is repurposed for less humanitarian ends, or where uncritical application of algorithms and use of technologies to collect this data results in unrepresentative or inequitable outcomes. Several examples are provided of the ways in which the capture and use of big data raise significant concerns relating not only to privacy and loss of control of personal data, but also to the potential for direct or inadvertent discrimination and profiling. Furthermore: "The persistence of data and its unknown future applications highlight the limitations of particular ethical and regulatory frameworks in the protection of children's data."

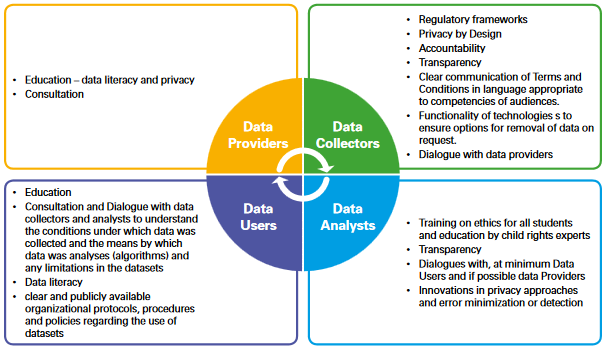

Thus, the authors argue, "[t]he big data world requires an explicit focus on child rights and data science, both as a separate discourse and as part of broader discussions on ethical and legal frameworks for big data collection, analysis and use. This discourse needs to take place within a system of multiple actors, including data producers (children and parents), collectors, analysts, end-users and child rights advocates, reflecting on multiple approaches to support both individual agency and societal accountability. Within this system of multiple actors, new forms of accountability and concepts of privacy and consent are required - together with better education for all stakeholders, better regulatory systems that specifically address concerns related to children's data, and better dialogue between stakeholders." The following section in the paper provides some considerations on possible mechanisms to support children's rights at all stages of the data chain by the various stakeholders. For example, at the level of the data user, it is noted that, within government departments, multilateral and bilateral agencies, non-governmental organisations (NGOs), and corporations, basic data-literacy skills must be enhanced to raise awareness of the value and the limitations of data, platforms, and algorithms.

What is needed, then, are regulatory frameworks informed by global best practice and stakeholder consultation. "Within these frameworks, there is a need to explicitly require increased transparency, accountability with explicit identification of the risks, the harms and the benefits associated with big data use. These frameworks must institutionalize the imperative to consider a range of methods to ensure privacy - for instance limiting, wherever possible, the personal data sought from children and encouraging the development and use of privacy enhanced technologies and anonymization techniques. Furthermore, the notion of voluntariness needs to be translated into the digital world, so that children and their families can easily withdraw from ongoing data collection and sharing processes. At the same time, greater reflection is required on the rights of children and adolescents, their right to express themselves and to be heard and importantly their right to privacy and confidentiality - including from their parents as well as from more traditional players in the data cycle. Undoubtedly, consistent international cooperation and guidance in the development of these frameworks and standards is needed to provide clarity on joint adoption and enforcement of applicable rules, standards and best practices..."

UNICEF Office of Research - Innocenti website, June 27, 2017.
